Industry News

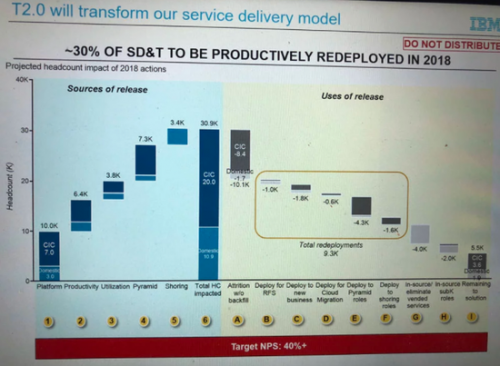

Breaking! IBM shuts down its China R&D unit, laying off a thousand people!

IBM lays off 10,000 people!

IBM acquires Red Hat: another step forward for its cloud computing business

Turbulent IBM: more than 10,000 staff cut through "automatic attrition"

- Hot parts of the week

- Flash sale on scarce parts

| Part number | Brand |
|---|---|
| BD71847AMWV-E2 | ROHM Semiconductor |
| CDZVT2R20B | ROHM Semiconductor |
| TL431ACLPR | Texas Instruments |
| RB751G-40T2R | ROHM Semiconductor |
| MC33074DR2G | onsemi |

| Part number | Brand |
|---|---|
| ESR03EZPJ151 | ROHM Semiconductor |
| TPS63050YFFR | Texas Instruments |
| BU33JA2MNVX-CTL | ROHM Semiconductor |
| STM32F429IGT6 | STMicroelectronics |
| IPZ40N04S5L4R8ATMA1 | Infineon Technologies |
| BP3621 | ROHM Semiconductor |

About Us

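The AMEYA360 online store (www.ameya360.com) launched in 2011. It currently has more than 3,500 quality suppliers, holds data on 6 million product part numbers, and stocks more than 1 million component types available for purchase. Its products cover MCUs, memory, power-management chips, IGBTs, MOSFETs, op-amps, RF/Bluetooth, sensors, resistors/capacitors/inductors, connectors and more. The platform's core business includes spot sales of electronic components, BOM matching, and supplying supporting product documentation, offering customers a one-stop purchasing service.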